Moore’s Law imposes a design challenge: How to make effective use of ever-increasing numbers of transistors without breaking the bank on power consumption? Simply packing in more instances of the same components is not always the answer. Often, a more productive approach is to move easily encapsulated, math-intensive operations into hardware.

The Xbox One (Durango) GPU includes a number of fixed-function accelerators. Move engines are one of them.

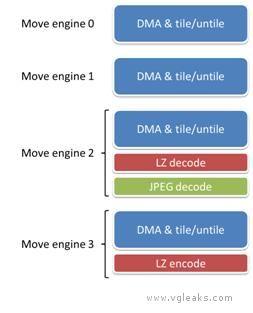

Xbox One (Durango) hardware has four move engines for fast direct memory access (DMA)

This accelerators are truly fixed-function, in the sense that their algorithms are embedded in hardware. They can usually be considered black boxes with no intermediate results that are visible to software. When used for their designed purpose, however, they can offload work from the rest of the system and obtain useful results at minimal cost.

The following figure shows the Durango move engines and their sub-components.

The four move engines all have a common baseline ability to move memory in any combination of the following ways:

- From main RAM or from ESRAM

- To main RAM or to ESRAM

- From linear or tiled memory format

- To linear or tiled memory format

- From a sub-rectangle of a texture

- To a sub-rectangle of a texture

- From a sub-box of a 3D texture

- To a sub-box of a 3D texture

The move engines can also be used to set an area of memory to a constant value.

DMA Performance

Each move engine can read and write 256 bits of data per GPU clock cycle, which equates to a peak throughput of 25.6 GB/s both ways. Raw copy operations, as well as most forms of tiling and untiling, can occur at the peak rate. The four move engines share a single memory path, yielding a total maximum throughput for all the move engines that is the same as for a single move engine. The move engines share their bandwidth with other components of the GPU, for instance, video encode and decode, the command processor, and the display output. These other clients are generally only capable of consuming a small fraction of the shared bandwidth.

The careful reader may deduce that raw performance of the move engines is less than could be achieved by a shader reading and writing the same data. Theoretical peak rates are displayed in the following table.

| Copy Operation | Peak throughput using move engine(s) | Peak throughput using shader |

| RAM ->RAM | 25.6 GB/s | 34 GB/s |

| RAM ->ESRAM | 25.6 GB/s | 68 GB/s |

| ESRAM -> RAM | 25.6 GB/s | 68 GB/s |

| ESRAM -> ESRAM | 25.6 GB/s | 51.2 GB/s |

The advantage of the move engines lies in the fact that they can operate in parallel with computation. During times when the GPU is compute bound, move engine operations are effectively free. Even while the GPU is bandwidth bound, move engine operations may still be free if they use different pathways. For example, a move engine copy from RAM to RAM would not be impacted by a shader that only accesses ESRAM.

Generic lossless compression and decompression

One move engine out of the four supports generic lossless encoding and one move engine supports generic lossless decoding. These operations act as extensions on top of the standard DMA modes. For instance, a title may decode from main RAM directly into a sub-rectangle of a tiled texture in ESRAM.

The canonical use for the LZ decoder is decompression (or transcoding) of data loaded from off-chip from, for instance, the hard drive or the network. The canonical use for the LZ encoder is compression of data destined for off-chip. Conceivably, LZ compression might also be appropriate for data that will remain in RAM but may not be used again for many frames—for instance, low latency audio clips.

The codec employed by the move engines is LZ77, the 1977 version of the Lempel-Ziv (LZ) algorithm for lossless compression. This codec is the same one used in zlib, glib and other standard libraries. The specific standard that the encoder and decoder adhere to is known as RFC1951. In other words, the encoder generates a compliant bit stream according to this standard, and the decoder can decompress certain compliant bit streams, and in particular, any bit stream generated by the encoder.

LZ compression involves a sliding window and operates in blocks. The window represents the history available to pattern-match against. A block denotes a self-contained unit, which can be decoded independently of the rest of the stream. The window size and block size are parameters of the encoder. Larger window and block sizes imply better compression ratios, while smaller sizes require less calculation and working memory. The Durango hardware encoder and decoder can support block sizes up to 4 MB. The encoder uses a window size of 1 KB, and the decoder uses a window size of 4 KB. These facts impose a constraint on offline compressors. In order for the hardware decoder to interpret a compressed bit stream, that bit stream must have been created with a window size no larger than 4 KB and a block size no larger than 4 MB. When compression ratio is more important than performance, developers may instead choose to use a larger window size and decode in software.

The LZ decoder supports a raw throughput of 200 MB/s compressed data. The LZ encoder is designed to support a throughput of 150-200 MB/s for typical texture content. The actual throughput will vary depending on the nature of the data.

JPEG decoding

The same move engine that supports LZ decoding also supports JPEG decoding. Just as with LZ, JPEG decoding operates as an extension on top of the standard DMA modes. For instance, a title may decode from main RAM directly into a sub-rectangle of a tiled texture in ESRAM. The move engines contain no hardware JPEG encoder, only a decoder.

The JPEG codec used by the move engine is known as ISO/IEC 10918-1, which was the 1994 JPEG committee standard. The hardware decoder does not support later standards, such as JPEG 2000 (wavelet encoding) or the format known variously as JPEG XR, HD Photo, or Windows Media Photo, which added a number of extensions to the base algorithm. There is no native support for grayscale-only textures or for textures with alpha.

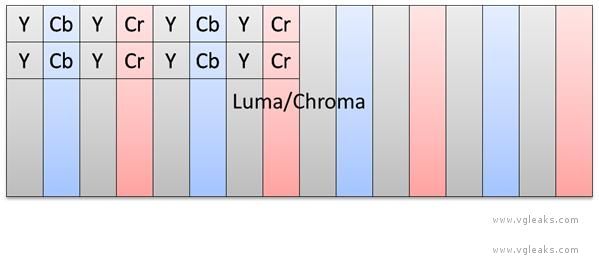

The move engine takes as input an entire JPEG stream, including the JFIF file header. It returns as output an 8-bit luma (Y or brightness) channel and two 8-bit subsampled chroma (CbCr or color) channels. The title must convert (if desired) from YCbCr to RGB using shader instructions.

The JPEG decoder supports both 4:2:2 and 4:2:0 subsampling of chroma. For illustration, see Figures 2 and 3. 4:2:2 subsampling means that each chroma channel is ½ the resolution of luma in the x direction, which implies a footprint of 2 bytes per texel. 4:2:0 subsampling means that each chroma channel is ½ the resolution of luma in both the x and y directions, which implies a footprint of 1.5 bytes per texel. The subsampling mode is a property of the compressed image, specified at encoding time.

In the case of 4:2:2 subsampling, the luma and chroma channels are interleaved. The GPU supports special texture formats (DXGI_FORMAT_G8R8_G8B8_UNORM) and tiling modes to allow all three channels to be fetched using a single instruction, even though they are of different resolutions.

JPEG decoder output, 4:2:2 subsampled, with chroma interleaved.

In the case of 4:2:0 subsampling, the luma and chroma channels are stored separately. Two fetches are required to read a decoded pixel—one for the luma channel and another (with different texture coordinates) for the chroma channels.

JPEG decoder output, 4:2:0 subsampled, with chroma stored separately.

Throughput of JPEG decoding is naturally much less than throughput of raw data. The following table shows examples of processing loads that approach peak theoretical throughput for each subsampling mode.

Peak theoretical rates for JPEG decoding.

Subsampling mode | Peak performance | Raw data rate |

4:2:2 | two 720p images/frame at 60 Hz | 2 × 1280 × 720 × 2 bytes × 60 Hz = 221 MB/s |

4:2:0 | two 1080p images/frame at 60 Hz | 2 × 1920 × 1080 × 1.5 bytes × 60 Hz = 373 MB/s |

System and title usage

Move engines 1, 2 and 3 are for the exclusive use of the running title.

Move engine 0 is shared between the title and the system. During the system’s GPU time slice, the system uses move engine 0. During the title’s GPU time slice, move engine 0 can be used by title code. It may also be used by Direct3D to assist in carrying out title commands. For instance, to complete a Map operation on a surface in ESRAM, Direct3D will use move engine 0 to move that surface to main memory.

![[Leak] Pre-release Version of Concord Surfaces Online](https://vgleaks.com/wp-content/uploads/2025/06/Concord-pre-release-150x150.jpg)